RALPH Loop: Building Self-Improving AI Systems WITHOUT Claude

Posted May 2, 2026 by Gowri Shankar ‐ 15 min read

Somewhere between “this agent will change everything” and “why is this output still confidently wrong,” it hit me—mid-debug, staring at a beautifully orchestrated multi-agent system that looked impressive on architecture diagrams and completely fell apart in practice. Like every builder with a half-charged laptop and an overconfident belief in “just one more agent,” I did what we all do: blamed the model. Maybe switch to Claude, maybe wait for the next breakthrough from Anthropic, maybe add more layers because clearly the problem was lack of intelligence. It wasn’t. The system wasn’t failing because it couldn’t think... it was failing because it never got the chance to think again. We’ve quietly optimized for first-pass answers in a world where the real strength of these models shows up in reflection, critique, and iteration. What I needed wasn’t a better model or a more elaborate agent hierarchy; it was a loop... a simple, almost embarrassingly obvious one... that forces the system to generate, question itself, and improve before anyone trusts the output. That loop is what I now call the RALPH Loop, and this post is about why it works, how to build it from scratch, and why it might matter more than whatever model release you’re waiting for next.

Foundation

The Core Idea

At its core, the RALPH Loop is not a framework, not a model feature, and definitely not something exclusive to Claude or Anthropic. It’s a way of structuring how an AI system thinks over time.

Most AI workflows today look like this:

You ask a question, the model responds, and you move on. Maybe you tweak the prompt, maybe you switch models… but fundamentally, you’re still relying on a single pass of reasoning.

The RALPH Loop challenges that assumption.

Instead of treating the first answer as final, it introduces a simple idea:

What if the system had to review itself before you ever see the result?

That’s it. No magic.

The loop breaks the process into a few deliberate stages:

- Generate an answer

- Critique that answer

- Judge whether it’s good enough

- If not, improve it and try again

What you end up with is not just an output, but a process of refinement.

And that distinction matters more than it sounds.

Because the moment you shift from:

“Get me the answer”

to:

“Work towards a better answer”

you stop building prompt pipelines…

…and start building systems that can think, check, and improve.

That’s the real idea behind the RALPH Loop.

Why This Works (Mental Model)

This is the part most people skip… and it’s exactly why their systems don’t improve.

The RALPH Loop works because it aligns with how these models actually behave, not how we wish they behaved. There’s a subtle but important truth here: LLMs are not designed to be perfect on the first attempt. They’re probabilistic, pattern-matching systems operating under uncertainty. So when you ask for a “final answer” in one shot, you’re essentially freezing the process at its weakest point… the initial guess.

But something interesting happens when you let the model look at its own output.

On the first pass, the model is focused on producing. It’s trying to be helpful, coherent, and complete… but it’s also guessing, filling gaps, smoothing over uncertainty. This is where you see generic phrasing, missed edge cases, or shallow reasoning.

On the second pass, when you ask it to critique, the mode of thinking shifts entirely. It’s no longer trying to generate… it’s trying to evaluate. And LLMs are surprisingly good at this. They can spot inconsistencies, identify weak arguments, and call out what’s missing with far more precision than they can produce it the first time.

Then comes the third step… refinement. Now the model has context: not just the original problem, but also a structured understanding of what went wrong. This is where the output starts to sharpen. It becomes more specific, more grounded, and more useful.

If you step back, this looks very familiar.

It’s not an AI trick. It’s just a thinking process we already trust:

- A writer drafts.

- An editor critiques.

- A reviewer decides if it’s good enough.

We don’t expect the first draft of anything important to be perfect. We expect it to go through iteration, feedback, and improvement. The RALPH Loop simply brings that same discipline into AI systems.

And once you see it this way, the flaw in most “one-shot” AI pipelines becomes obvious.

They don’t fail because the model is weak.

They fail because the system never lets the model think beyond its first draft.

The System

The 3-Agent Model

Once you accept that systems need to think in loops, the architecture becomes surprisingly simple.

At the core, you only need three roles:

The Generator optimizes for breadth. It produces a solid first draft… covering the problem space without worrying about being perfect. Its job is to move fast and make something tangible.

The Critic brings depth through destruction & remediation. It doesn’t just point out issues… it identifies gaps, weak reasoning, and missing context. More importantly, this is where remediation begins. The critic doesn’t stop at “what’s wrong”… it guides how to fix it, turning feedback into actionable improvements.

The Judge enforces control. It evaluates the output, assigns a score, and decides whether the system should accept the result or loop again. This is what prevents both premature exits and endless iteration.

That’s the system.

Not a collection of agents with overlapping responsibilities—but a clear, repeatable thinking pattern that you can apply across stages.

👉 Important:

“You don’t need 10 agents”

From Concept to System

Up to this point, the RALPH Loop sounds almost… too simple. And that’s exactly where most implementations fall apart.

Because the moment you move from idea to code, the default approach looks like this:

pass a prompt → get a string → pass it to the next step → repeat

Everything becomes:

str → str → str

It works—for a demo.

But the cracks show up quickly.

There’s no structure to what the system is producing. The critic returns a blob of text, the judge responds with another blob, and somewhere in between you’re trying to “parse intent” out of paragraphs. Debugging becomes guesswork. You don’t know whether the issue is in generation, critique, or evaluation… you just know something feels off.

Worse, the system has no memory of what it’s doing. There’s no clear contract between stages, no way to track what improved across iterations, and no reliable way to enforce consistency.

This is the hidden problem with most agent pipelines:

They look sophisticated, but internally they’re just passing around strings.

If you want this to work beyond a toy example, you need to treat it like a real system.

That means introducing structure.

Not optional structure. Not “we’ll clean it up later” structure. But explicit contracts between each stage of the loop.

This is where strong typing comes in.

Because the moment you move from:

“Here’s some text”

to:

“Here’s a defined object with fields, expectations, and constraints”

everything changes.

- You can validate outputs

- You can log and inspect each stage

- You can measure quality across iterations

- You can debug failures without reading paragraphs of text

And most importantly:

You turn a prompt chain into a system you can reason about

In the next section, we’ll define these contracts explicitly using Pydantic… and that’s where the RALPH Loop starts to feel less like a pattern… and more like real engineering.

Strong Typing with Pydantic (The Real Differentiator)

This is where most “agent systems” quietly fall apart… and where yours doesn’t have to.

If your Generator, Critic, and Judge are just passing around strings, you don’t really have a system. You have a loosely connected set of prompts hoping to behave. And hope is not a strategy.

The moment you introduce strong typing, everything changes.

Instead of:

“Here’s some text, figure it out”

you move to:

“Here’s a well-defined contract… adhere to it”

Let’s make that concrete.

from pydantic import BaseModel

from typing import List

class GeneratedOutput(BaseModel):

content: str

assumptions: List[str]

class Critique(BaseModel):

issues: List[str]

severity: List[int] # 1–5 scale

suggestions: List[str]

class Judgement(BaseModel):

score: float # 0–1

decision: str # "accept" | "revise"

reasoning: str

Now each stage in your loop is no longer guessing what it received… it knows.

- The Generator must produce structured content and explicitly state assumptions

- The Critic must return concrete issues, not vague feedback

- The Judge must quantify quality and make a decision

You’ve turned implicit behavior into enforced contracts.

Why This Matters

This isn’t just about cleaner code. It fundamentally upgrades your system in three ways:

1. Observability You can now inspect every stage with precision.

- What issues were found?

- Did severity reduce across iterations?

- Why did the judge reject?

No more reading paragraphs trying to infer what went wrong.

2. Reliability You can validate outputs at runtime.

- Missing fields? Fail fast.

- Invalid structure? Retry.

Instead of silent failures, you get controlled behavior.

3. Composability This is where it gets powerful.

Once everything is typed:

- You can plug stages into different pipelines

- Reuse critics across domains

- Swap judges without breaking the system

You’re no longer chaining prompts… you’re building modular components.

Here’s the real shift:

Without typing → you’re orchestrating text

With typing → you’re orchestrating state

And systems built on state are the ones you can scale, debug, and trust.

This is the point where the RALPH Loop stops being a clever idea… and starts looking like something you can actually ship.

Core Loop Logic (The Engine)

This is the part where it all comes together… and also where most people expect unnecessary complexity.

There isn’t any.

At its core, the RALPH Loop is just a controlled iteration with three things:

- iteration control (don’t loop forever)

- threshold-based exit (know when to stop)

- state passing (carry context forward)

That’s it.

MAX_ITERATIONS = 3

THRESHOLD = 0.8

def ralph_loop(input_prompt: str):

iteration = 0

current_output = None

while iteration < MAX_ITERATIONS:

# Step 1: Generate

generated = generator(input_prompt, current_output)

# Step 2: Critique

critique = critic(generated)

# Step 3: Judge

judgement = judge(generated, critique)

# Exit condition

if judgement.decision == "accept" and judgement.score >= THRESHOLD:

return generated

# Step 4: Remediate (guided by critique)

current_output = remediate(generated, critique)

iteration += 1

return current_output

If you strip away the prompts and models, what you’re left with is just this:

The iteration control ensures you don’t chase perfection endlessly.

The threshold gives you a measurable definition of “good enough.”

And the state (current_output) ensures each loop is actually building on the previous one… not starting from scratch.

That last part is subtle, but critical.

Without state, you’re just rerunning prompts. With state, you’re refining thinking over time.

And that’s the “aha” moment.

Not:

“This is a complex agent system”

But:

“This is just a loop… done right.”

Remediation Strategy (The Part Everyone Handwaves)

This is where most systems quietly fail.

You’ll often see remediation implemented as:

“Rewrite this better”

It sounds reasonable. It almost never works.

Why? Because naive rewriting treats the entire output as broken. The model throws everything away and starts fresh… usually replacing specific, imperfect content with something more generic but “cleaner.” You lose signal, gain fluff, and convince yourself it improved because it reads smoother.

It didn’t. It just got safer.

Real remediation is not rewriting. It’s targeted correction.

The goal is simple:

Fix what’s wrong. Preserve what’s right.

That requires two things:

- Precise critique (what exactly is broken)

- Constrained improvement (what exactly should change)

Why Naive Rewriting Fails

- It ignores the critic’s specificity

- It resets useful context

- It optimizes for fluency, not correctness

- It introduces new errors while “fixing” old ones

You end up in loops that look productive but don’t actually converge.

What Good Remediation Looks Like

A strong remediation step should:

- Address each issue explicitly

- Retain valid sections of the original output

- Improve depth where needed (not everywhere)

- Avoid stylistic rewrites unless necessary

In other words, it should behave less like a writer… and more like an editor with a red pen.

Designing the Prompt (This Matters More Than You Think)

Your remediation prompt should force discipline. Something along these lines:

def remediate(generated, critique):

prompt = f"""

You are improving an existing output based on a critique.

Original Output:

{generated.content}

Identified Issues:

{critique.issues}

Suggested Fixes:

{critique.suggestions}

Instructions:

- Fix each issue explicitly

- Do NOT rewrite the entire response

- Preserve correct and useful parts

- Improve clarity and depth only where needed

- Avoid introducing new information unless required

Return the improved version.

"""

return generator(prompt)

Notice what this does:

- It anchors the model to the original output

- It forces alignment with the critique

- It prevents unnecessary rewriting

The Real Shift

Without remediation:

You’re just looping generation

With proper remediation:

You’re converging on quality

And that’s the difference between:

- a system that changes output

- and a system that actually improves output

Most blogs skip this because it’s subtle.

But in practice, this is where the RALPH Loop either becomes powerful… or quietly degenerates into expensive rephrasing.

Judge & Scoring System

This is where the loop stops being a clever pattern… and starts behaving like a system you can trust.

Without a judge, you’re just iterating blindly. With a weak judge, you’re iterating confidently in the wrong direction.

The Judge introduces three things:

- measurement (how good is this?)

- decision (do we accept or loop?)

- control (when do we stop?)

Defining Scoring Dimensions

If your judge only asks “is this good?”, you’ve already lost.

You need clear dimensions… so the system knows what good means.

Typical ones:

- Completeness

- Correctness

- Clarity

- Depth

- Actionability

Now let’s make this explicit using a typed scoring model.

from pydantic import BaseModel, Field

from typing import Literal

class ScoreBreakdown(BaseModel):

completeness: float = Field(ge=0, le=1)

correctness: float = Field(ge=0, le=1)

clarity: float = Field(ge=0, le=1)

depth: float = Field(ge=0, le=1)

actionability: float = Field(ge=0, le=1)

class Judgement(BaseModel):

score: float = Field(ge=0, le=1)

breakdown: ScoreBreakdown

decision: Literal["accept", "revise"]

reasoning: str

Now your judge isn’t just giving a vague score… it’s structured, explainable, and enforceable.

Acceptance Criteria (The Gate)

Once scoring is structured, your threshold becomes meaningful.

THRESHOLD = 0.8

Decision logic:

- Score ≥ threshold → accept

- Score < threshold → revise

You can even evolve this further:

- Require minimum scores per dimension

- Weight certain dimensions higher (e.g., correctness > clarity)

Preventing Infinite Loops

Here’s where production reality kicks in.

You need hard constraints:

MAX_ITERATIONS = 3

And ideally, convergence logic:

if iteration > 0 and judgement.score <= prev_score:

break

This ensures:

- No endless loops

- No token burn for marginal gains

- No illusion of improvement

Why This Matters

This is the real shift:

Without structure → subjective outputs

With scoring → measurable quality

You now have:

- Full visibility into why something was accepted or rejected

- The ability to track improvement across iterations

- A system that can be tuned, tested, and trusted

And most importantly:

You’re no longer shipping outputs because they sound good You’re shipping outputs because they meet a defined standard

That’s what turns a loop into a system.

The Final Layer

Orchestration Philosophy

Let’s address the obvious question.

If this is a “system,” shouldn’t you start with orchestration frameworks? Something like LangChain or CrewAI?

Short answer: no. At least, not in the beginning.

Because orchestration doesn’t fix bad thinking. It just scales it.

Why NOT Start with Frameworks

Frameworks are great at:

- wiring components together

- managing flows

- adding abstractions

But they don’t solve:

- weak critique

- poor remediation

- lack of scoring

- unclear contracts

If your core loop is broken, wrapping it in a framework just makes it harder to debug.

You’ll end up with:

- hidden state

- implicit transitions

- “why did this agent do that?” moments

Instead, start with something brutally simple:

- Plain Python

- Explicit function calls

- Clear state passing

- Logged outputs at every stage

Make the loop work end-to-end before you abstract it away.

Start Simple → Then Evolve

Your first version should feel almost boring:

deterministic flow → visible state → predictable behavior

Once that works, then you evolve.

When to Introduce Real Orchestration

Bring in heavier tools when you actually need them… not before.

For example:

- Async execution When stages are slow or independent

- Retries & fault tolerance When API failures become real

- Durable workflows When loops need to survive restarts

This is where something like Temporal starts making sense.

It gives you:

- state persistence

- retry policies

- long-running workflow support

Without forcing you to redesign your logic.

The Real Principle

First, design how your system thinks Then, design how your system runs

Most people do the opposite.

They start with orchestration, hoping it will create intelligence. It doesn’t.

The intelligence is in the loop:

- Generator

- Critic

- Judge

- Remediation

Orchestration just makes it scalable.

Here is a multistage(4) pipeline that has

- Data Collection

- Metrics Calculation

- Policy and Specialization Adherence

- Analysis and Reporting

If you get this order right:

- simple → correct → observable → scalable

you’ll avoid 90% of the pain people associate with “agent systems.”

👉 This aligns with your real-world approach.

Failure Modes (Let’s Not Pretend This Is Easy)

This is the part most blogs conveniently skip.

Because everything looks elegant… until it runs in the real world.

If you’re building with the RALPH Loop (or any agent system), here’s where things actually break:

Weak Critique → No Improvement

If your critic says:

“This could be improved”

You’ve already lost.

A soft critic produces vague feedback → vague remediation → same quality output.

Your loop runs… but nothing converges.

No Threshold → Infinite Loops

If “good enough” is not defined, your system will:

- either stop too early

- or never stop

Both are bad.

Without a threshold, you’re not iterating… you’re drifting.

Too Many Agents → Artificial Complexity

Adding more agents feels productive.

It isn’t.

You get:

- overlapping responsibilities

- conflicting outputs

- harder debugging

And somehow, worse results.

No Structure → Silent Chaos

String-in, string-out systems fail quietly.

- Critique is inconsistent

- Judge is subjective

- Remediation drifts

And you don’t know where things broke.

This is how “it worked yesterday” systems are born.

The Real World Hits Back (429s, 503s, and Reality)

Everything above assumes your system actually runs smoothly.

It won’t.

You’ll hit:

- 429 (rate limits)

- 503 (service unavailable)

- timeouts

- partial failures

And suddenly your beautiful loop is… fragile.

If you don’t handle this, your system doesn’t degrade… it collapses.

Retries Are Not Optional

You need:

- retries with limits

- exponential backoff

- idempotent calls (don’t double-process blindly)

Example mindset:

“Every agent call can fail. Design like it will.”

Exponential Backoff (Basic Pattern)

import time

import random

def retry_with_backoff(fn, max_retries=3, base_delay=1.0):

for attempt in range(max_retries):

try:

return fn()

except Exception as e:

if attempt == max_retries - 1:

raise e

delay = base_delay * (2 ** attempt) + random.uniform(0, 0.5)

time.sleep(delay)

This alone will save you from a lot of pain.

The Honest Take

Most failures won’t come from:

- bad models

- bad prompts

They’ll come from:

- weak system design

- lack of constraints

- ignoring real-world failures

Final Reality Check

A loop doesn’t guarantee improvement

A system doesn’t guarantee reliability

You earn both by:

- enforcing structure

- defining quality

- handling failure like an adult system

That’s the difference between:

- a cool demo

- and something that survives production

Real Use Case

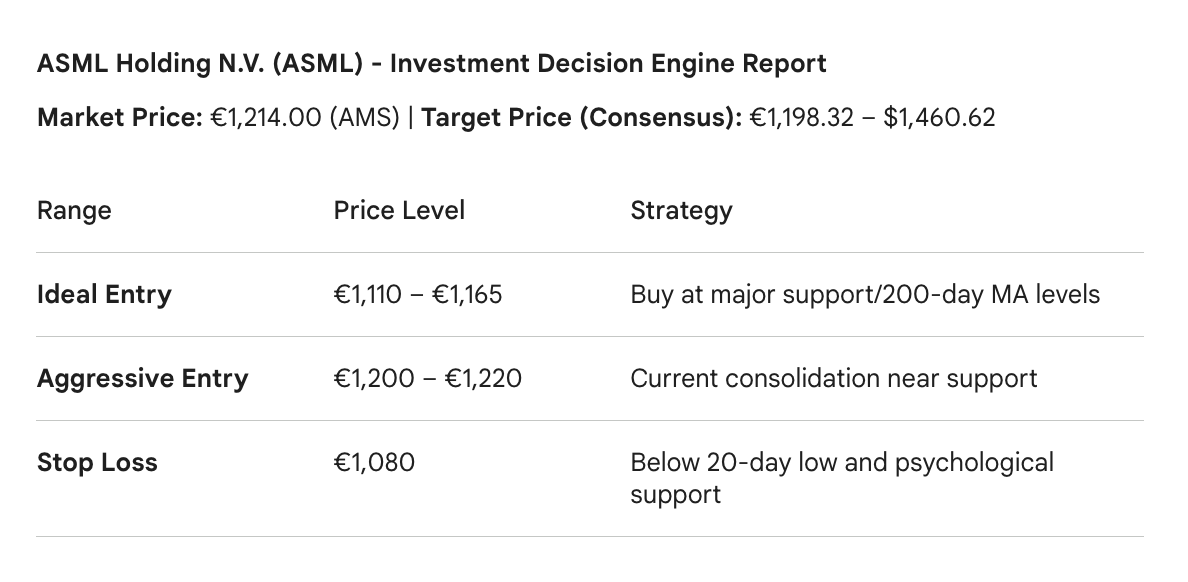

This approach is used in building a stock analyzer that helped make some money. & the stock is trading at $1427.02 as of 1st April, 2026

PS: Ignore the Euro symbol, it ain’t perfect yet.

Final Take

It’s tempting to believe that better AI systems come from adding more and more agents, more tools, more orchestration. It feels like progress. It looks like sophistication.

But most of the time, it’s just noise.

What actually moves the needle is much simpler, and much harder to get right:

How your system thinks.

Not once. But over time.

The RALPH Loop forces that shift. It takes you from:

- single-pass answers → iterative reasoning

- implicit behavior → explicit evaluation

- hopeful outputs → measured decisions

And once you build with that mindset, something changes.

You stop asking:

“Which model should I use?”

And start asking:

“How does my system improve its own answers?”

That’s a much more durable question.

Because models will change. APIs will evolve. New releases from Anthropic or anyone else will keep raising the ceiling.

But none of that matters if your system still settles for the first draft.

So don’t optimize for more components. Don’t chase complexity for its own sake.

Design how your system thinks, not how many components it has.

That’s where real leverage is.